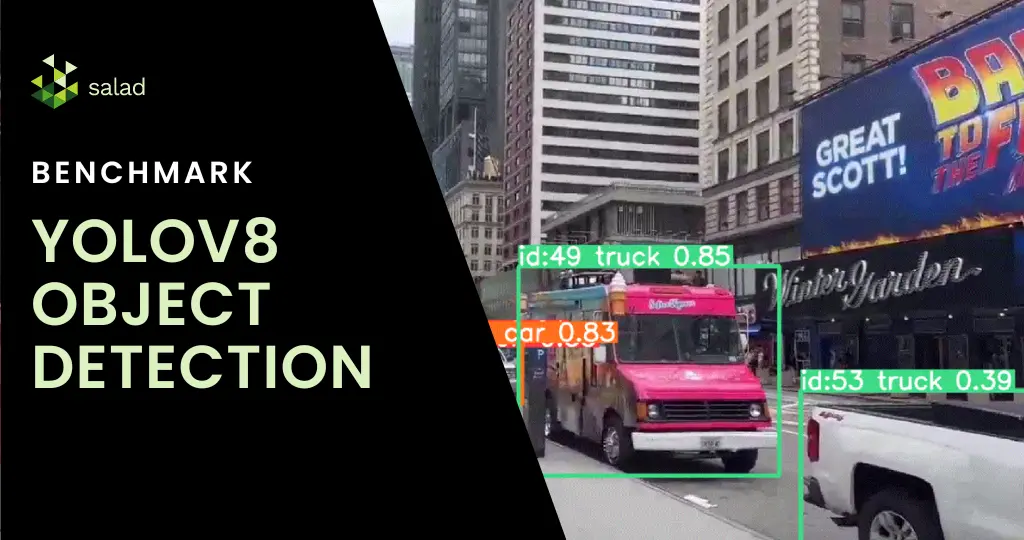

What is YOLOv8?

In the fast-evolving world of AI, object detection has made remarkable strides, epitomized by YOLOv8. YOLO (You Only Look Once) is an object detection and image segmentation model first launched in 2015, and YOLOv8, developed by Ultralytics, was its latest version at the time of writing. The algorithm is not just about recognizing objects; it’s about doing so in real time with unparalleled precision and speed. From monitoring fast-paced sports events to overseeing production lines, YOLOv8 is transforming how we see and interact with moving images. With features like spatial attention, feature fusion, and context aggregation modules, YOLOv8 is being used extensively in agriculture, healthcare, and manufacturing, among others. In this YOLOv8 benchmark, we compare the cost of running YOLOv8 on SaladCloud and Azure.

Running object detection on SaladCloud’s GPUs: A fantastic combination

YOLOv8 can be run on GPUs as long as they have enough memory and support CUDA. But with the GPU shortage and high cost, you need GPUs rented at affordable prices to make the economics work. SaladCloud’s network of 10,000+ Nvidia consumer GPUs has the lowest prices in the market and is a perfect fit for YOLOv8.

Deploying YOLOv8 on SaladCloud democratizes high-end object detection by offering a scalable, cost-effective cloud platform for mainstream use. With GPUs starting at $0.02/hour, SaladCloud gives businesses and developers an affordable way to run sophisticated object detection at scale.

A deep dive into live stream video analysis with YOLOv8

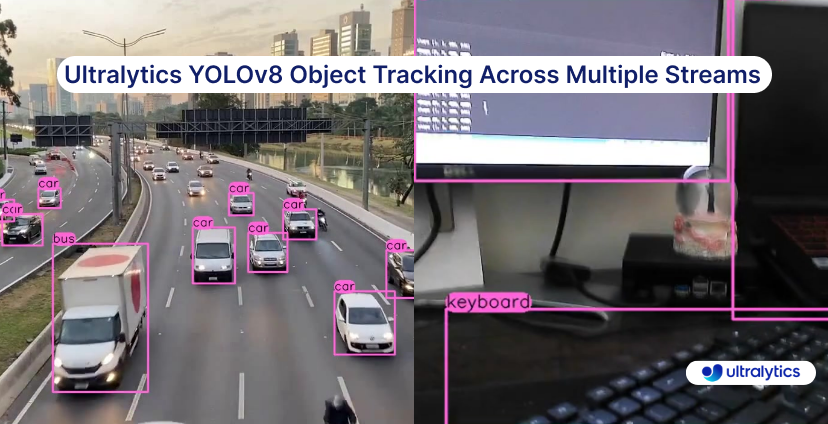

This benchmark harnesses YOLOv8 to analyze not only pre-recorded but also live video streams. The process begins by capturing a live stream link, followed by real-time object detection and tracking.
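The capture, detect, and track loop can be sketched roughly as follows. This is an illustration under assumptions, not the benchmark’s code: it assumes the `ultralytics` package, its built-in `model.track` tracker, and the `yolov8n.pt` weights, and the names `Detection`, `detections_from_result`, and `track_stream` are hypothetical. Each frame is turned into `(timestamp, label, track_id)` records; track IDs come back as floats, which matches the `id 1.0` style in the summary below.

```python
from datetime import datetime
from typing import Any, Iterator, List, NamedTuple


class Detection(NamedTuple):
    """One tracked object in one frame (hypothetical record layout)."""
    timestamp: datetime
    label: str
    track_id: float


def detections_from_result(result: Any, timestamp: datetime) -> List[Detection]:
    """Turn one Ultralytics Results object into per-object records."""
    boxes = result.boxes
    # boxes.id is None when the tracker has not assigned IDs in this frame.
    if boxes is None or boxes.id is None:
        return []
    return [
        Detection(timestamp, result.names[int(cls)], float(track_id))
        for cls, track_id in zip(boxes.cls.tolist(), boxes.id.tolist())
    ]


def track_stream(source: str, weights: str = "yolov8n.pt") -> Iterator[Detection]:
    """Run YOLOv8 detection plus tracking on a video file or stream URL."""
    # Imported lazily so detections_from_result works without ultralytics installed.
    from ultralytics import YOLO

    model = YOLO(weights)
    # stream=True yields results frame by frame instead of collecting them all.
    for result in model.track(source=source, stream=True):
        # Wall-clock processing time, not the frame's capture time.
        yield from detections_from_result(result, datetime.now())
```

Usage would look like `for det in track_stream("rtsp://<camera>/stream"): ...`, where the URL is a placeholder. Keeping the per-frame conversion separate from the model call makes it easy to test and to swap the tracker.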

Using GPUs on SaladCloud, we can process each video frame in less than 10 milliseconds, which is 10 times faster than using a CPU.

Each frame’s data is meticulously compiled, yielding a detailed dataset that provides timestamps, classifications, and other critical metadata. As a result, we get a nice summary of all the objects present in our video:

- person with id 1.0 was present in the video for 0 days 00:01:34.273634 from 2023-11-08 03:24:55.866030 to 2023-11

- person with id 2.0 was present in the video for 0 days 00:00:52.874862 from 2023-11-08 03:24:55.866030 to 2023-11

- person with id 3.0 was present in the video for 0 days 00:01:02.194742 from 2023-11-08 03:24:55.866030 to 2023-11

- person with id 4.0 was present in the video for 0 days 00:00:13.343196 from 2023-11-08 03:24:55.866030 to 2023-11

- person with id 5.0 was present in the video for 0 days 00:00:12.491371 from 2023-11-08 03:24:55.866030 to 2023-11

- person with id 6.0 was present in the video for 0 days 00:00:37.937545 from 2023-11-08 03:24:55.866030 to 2023-11

- person with id 7.0 was present in the video for 0 days 00:00:12.994483 from 2023-11-08 03:24:55.866030 to 2023-11

- car with id 8.0 was present in the video for 0 days 00:00:05.370814 from 2023-11-08 03:24:55.866030 to 2023-11-08

- car with id 9.0 was present in the video for 0 days 00:00:01.655127 from 2023-11-08 03:24:55.866030 to 2023-11-08
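A summary like the one above can be derived from per-frame records by taking, for each tracked object, the first and last time it was seen. The sketch below is an assumed reconstruction using only the standard library; the record layout `(timestamp, label, track_id)` and the function name `summarize` are hypothetical. Durations print as `datetime.timedelta` strings (for example `0:01:34`) rather than the pandas-style `0 days 00:01:34...` shown above.

```python
from datetime import datetime, timedelta
from typing import Dict, Iterable, List, Tuple

Record = Tuple[datetime, str, float]  # (timestamp, class label, track ID)


def summarize(records: Iterable[Record]) -> List[str]:
    """Build one presence line per tracked object, ordered by track ID."""
    spans: Dict[Tuple[str, float], Tuple[datetime, datetime]] = {}
    for ts, label, track_id in records:
        key = (label, track_id)
        if key in spans:
            first, last = spans[key]
            spans[key] = (min(first, ts), max(last, ts))
        else:
            spans[key] = (ts, ts)
    lines = []
    for (label, track_id), (first, last) in sorted(spans.items(), key=lambda kv: kv[0][1]):
        lines.append(
            f"{label} with id {track_id} was present in the video "
            f"for {last - first} from {first} to {last}"
        )
    return lines


if __name__ == "__main__":
    t0 = datetime(2023, 11, 8, 3, 24, 55)
    demo = [
        (t0, "person", 1.0),
        (t0 + timedelta(seconds=94), "person", 1.0),
        (t0 + timedelta(seconds=2), "car", 8.0),
        (t0 + timedelta(seconds=7), "car", 8.0),
    ]
    for line in summarize(demo):
        print(line)
```

Measuring presence as first-seen to last-seen is a simplifying choice: if an object leaves the frame and returns under the same track ID, the gap is counted as present time.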

How to run YOLOv8 on SaladCloud’s GPUs

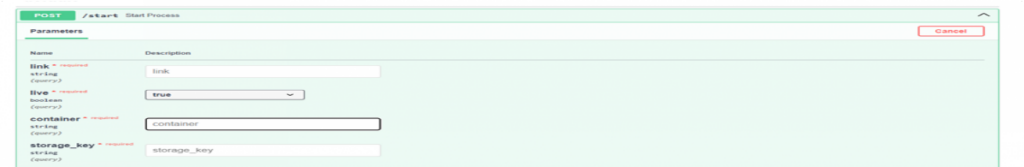

We built a FastAPI application with a dual role: it processes video streams in real time and offers interactive documentation via Swagger UI. You can process live streams from YouTube, RTSP, RTMP, and TCP, as well as regular videos. All results are saved to an Azure storage account you specify. All you need to do is send an API call with the video link, whether or not the video is a live stream, your storage account information, and how often you want the results saved. We also added multithreading, so multiple video streams can be processed simultaneously.
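The request-plus-worker pattern described above might look roughly like this. It is a sketch, not the project’s API: the field names in `StreamRequest` and the `StreamDispatcher` class are hypothetical, and the real FastAPI service would normally declare its request body as a pydantic model and call `submit()` from a POST handler. Plain dataclasses and `threading` are used here only to keep the example self-contained. Threads are a reasonable fit because each stream spends much of its time waiting on network I/O and GPU work rather than running Python code.

```python
import threading
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass(frozen=True)
class StreamRequest:
    """Hypothetical request body; field names are illustrative only."""
    video_url: str
    is_live: bool
    storage_connection_string: str
    container_name: str
    save_interval_seconds: int = 60  # how often results are flushed to storage

    def __post_init__(self) -> None:
        if not self.video_url:
            raise ValueError("video_url is required")
        if self.save_interval_seconds <= 0:
            raise ValueError("save_interval_seconds must be positive")


class StreamDispatcher:
    """Starts one worker thread per submitted video so streams run concurrently."""

    def __init__(self, process: Callable[[StreamRequest], None]) -> None:
        # process is a placeholder for the detect-track-upload loop.
        self._process = process
        self._threads: List[threading.Thread] = []
        self._lock = threading.Lock()

    def submit(self, request: StreamRequest) -> threading.Thread:
        worker = threading.Thread(target=self._process, args=(request,), daemon=True)
        worker.start()
        with self._lock:  # submit() may be called from several request handlers
            self._threads.append(worker)
        return worker

    def join_all(self, timeout: Optional[float] = None) -> None:
        with self._lock:
            workers = list(self._threads)
        for worker in workers:
            worker.join(timeout)
```

Validating the request before starting a thread means a malformed call fails immediately at the API boundary instead of inside a background worker.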

Deploying on SaladCloud

In our step-by-step guide, you can go through the full deployment journey on SaladCloud. We configured container groups, set up efficient networking, and ensured secure access. Deploying the FastAPI application on SaladCloud proved to be not just technically feasible but also cost-effective, highlighting the platform’s efficiency.

Price comparison: Processing live streams and videos on Azure and SaladCloud

When deploying object detection models, especially for tasks like processing live streams and videos, understanding the cost implications of different cloud services is crucial. Let’s compare prices for our live stream object detection project:

Context and Considerations

Live Stream Processing: Live streams are unique in that they can only be processed as the data is received. Even with the best GPUs, the processing is limited to the current feed rate.
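Because a live stream delivers frames at a fixed rate, a fast GPU mostly sits idle between frames. The arithmetic below makes that concrete, assuming a 30 fps feed (our assumption, not a figure from this benchmark) and the roughly 10 ms per-frame GPU time reported for this setup. It ignores decoding, network, and storage overhead, so the stream count is an optimistic upper bound for that workload; since 10 ms is itself an upper bound on inference time, real headroom may be somewhat different.

```python
import math


def frame_budget_ms(fps: float) -> float:
    """Time available per frame before the next one arrives."""
    return 1000.0 / fps


def gpu_busy_fraction(fps: float, inference_ms: float, streams: int = 1) -> float:
    """Fraction of wall-clock time the GPU spends on inference."""
    return streams * fps * inference_ms / 1000.0


def max_streams_per_gpu(fps: float, inference_ms: float) -> int:
    """Streams one GPU could keep pace with, ignoring all non-inference overhead."""
    return math.floor(frame_budget_ms(fps) / inference_ms)


if __name__ == "__main__":
    fps, inference_ms = 30, 10  # assumed 30 fps feed; ~10 ms per frame
    print(f"budget per frame: {frame_budget_ms(fps):.1f} ms")  # 33.3 ms
    print(f"GPU busy for one stream: {gpu_busy_fraction(fps, inference_ms):.0%}")  # 30%
    print(f"max streams per GPU: {max_streams_per_gpu(fps, inference_ms)}")  # 3
```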

Azure’s Real-Time Endpoint: For a fair comparison, we assume an Azure Machine Learning studio real-time endpoint. This setup suits a synchronous process that doesn’t require a fully dedicated VM.

Azure Pricing Overview

We will now compare compute prices in Azure and SaladCloud. Note that in Azure you cannot pick RAM, vCPUs, and GPU memory separately; you can only choose from preconfigured compute sizes. With SaladCloud, you can pick exactly what you need.

Lowest Compute in Azure: For our price comparison, we’ll start with Azure’s lowest-cost compute option and then move to GPU compute, keeping in mind that the closest model to our solution is YOLOv5.

1. Processing a Live Stream

| Service | Configuration | Cost per hour | Remarks |
|---|---|---|---|
| Azure | 4 cores, 16 GB RAM (no GPU) | $0.19 | General-purpose compute, no dedicated GPU |
| SaladCloud | 4 vCores, 16 GB RAM | $0.032 | Equivalent to Azure’s general-purpose compute |

Percentage Cost Difference for General Compute

SaladCloud is approximately 83% cheaper than Azure for general compute configurations.
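The percentage follows directly from the hourly prices in the table: the price difference divided by the Azure price. A small helper (the name `savings_percent` is ours) reproduces it:

```python
def savings_percent(baseline_per_hour: float, candidate_per_hour: float) -> float:
    """Percentage by which the candidate price is lower than the baseline."""
    if baseline_per_hour <= 0:
        raise ValueError("baseline price must be positive")
    return (baseline_per_hour - candidate_per_hour) / baseline_per_hour * 100


if __name__ == "__main__":
    # Azure 4-core general compute vs. SaladCloud 4 vCores, from the table above
    print(f"{savings_percent(0.19, 0.032):.1f}%")  # 83.2%
```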

2. Processing with GPU Support (the GPU configuration Azure recommends for YOLOv5)

| Service | Configuration | Cost per hour | Remarks |
|---|---|---|---|
| Azure | NC16as_T4_v3 (16 vCPUs, 110 GB RAM, 1 GPU) | $1.20 | Recommended for YOLOv5 |
| SaladCloud | Equivalent GPU configuration | $0.326 | SaladCloud’s equivalent GPU offering |

Percentage Cost Difference for GPU Compute

SaladCloud is approximately 73% cheaper than Azure for similar GPU configurations.
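Because a live stream keeps its machine busy around the clock, hourly prices add up quickly. The sketch below converts the GPU prices from the table into a monthly figure, assuming continuous 24/7 operation and 730 hours per month (8,760 hours per year divided by 12); both assumptions are ours, and storage and egress costs are left out.

```python
HOURS_PER_MONTH = 730  # 8,760 hours per year / 12; assumes the stream runs 24/7

# Hourly GPU prices from the table above
PRICES = {"Azure NC16as_T4_v3": 1.20, "SaladCloud GPU": 0.326}


def monthly_cost(price_per_hour: float, hours: float = HOURS_PER_MONTH) -> float:
    """Compute-only cost of keeping one instance running for the given hours."""
    return price_per_hour * hours


if __name__ == "__main__":
    for name, price in PRICES.items():
        print(f"{name}: ${monthly_cost(price):,.2f}/month")
    # Azure NC16as_T4_v3: $876.00/month
    # SaladCloud GPU: $237.98/month
```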

YOLOv8 deployment on GPUs in just a few clicks

You can deploy YOLOv8 in production on SaladCloud’s GPUs in just a few clicks. Simply download the code from our GitHub repository or pull our ready-to-deploy Docker container from the SaladCloud Portal.

It’s as straightforward as it sounds – download, deploy, and you’re on your way to exploring the capabilities of YOLOv8 in real-world scenarios. Check out the SaladCloud documentation for quick guides on how to start using our batch or synchronous solutions.

Check out our step-by-step guide

For a comprehensive walkthrough of deploying YOLOv8 on SaladCloud, check out our step-by-step guide here. The guide covers:

- Reference architecture

- Developing a FastAPI application for real-time processing

- Setting up batch processing for asynchronous workloads

- Testing in a local environment

- Processing live video streams from YouTube

- Processing live video streams from RTSP, RTMP, TCP, and IP addresses

- Implementing object tracking

- Running the process on GPUs

- Storing object detection results in Azure storage
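Since the service accepts YouTube links, network streams (RTSP, RTMP, TCP, including IP cameras), and regular video files, one early step is deciding what kind of source a URL points to. The helper below is a hypothetical sketch of that routing based only on the URL scheme and host; the category names and host list are our assumptions, and the guide may route sources differently. A YouTube page is not a raw video stream, so that branch is where a resolver would need to plug in.

```python
from urllib.parse import urlparse

# Hypothetical routing tables; adjust to the sources you actually support.
STREAM_SCHEMES = {"rtsp", "rtmp", "tcp"}
YOUTUBE_HOSTS = {"youtube.com", "www.youtube.com", "m.youtube.com", "youtu.be"}


def classify_source(url: str) -> str:
    """Return 'rtsp', 'rtmp', 'tcp', 'youtube', or 'http' for a video source URL."""
    parsed = urlparse(url)
    scheme = parsed.scheme.lower()
    if scheme in STREAM_SCHEMES:
        # Network stream, e.g. an IP camera at rtsp://192.168.1.10:554/stream
        return scheme
    if scheme in ("http", "https"):
        # urlparse already lower-cases hostname
        if (parsed.hostname or "") in YOUTUBE_HOSTS:
            # Needs resolving to a direct stream URL before decoding
            return "youtube"
        # Plain HTTP(S) video file or stream
        return "http"
    raise ValueError(f"unsupported video source: {url!r}")
```

For example, `classify_source("rtsp://192.168.1.10:554/stream")` returns `"rtsp"`, and a `https://www.youtube.com/watch?...` link returns `"youtube"`.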

This process is fully customizable to your needs. Follow along, make modifications, and experiment to your heart’s content. Our guide is designed to be flexible, allowing you to adjust and enhance the deployment of YOLOv8 according to your project requirements or curiosity.

We are excited about the potential enhancements and extensions of this project. Future considerations include broadening cloud integrations, delving into custom model training, and exploring batch processing capabilities.

SaladCloud is the world’s largest distributed cloud computing network, with 11,000+ GPUs active daily and 450,000 GPUs contributing compute, all at the lowest cost in the market.