Government Science on Citizen Machines – Why Distributed Cloud is the Way

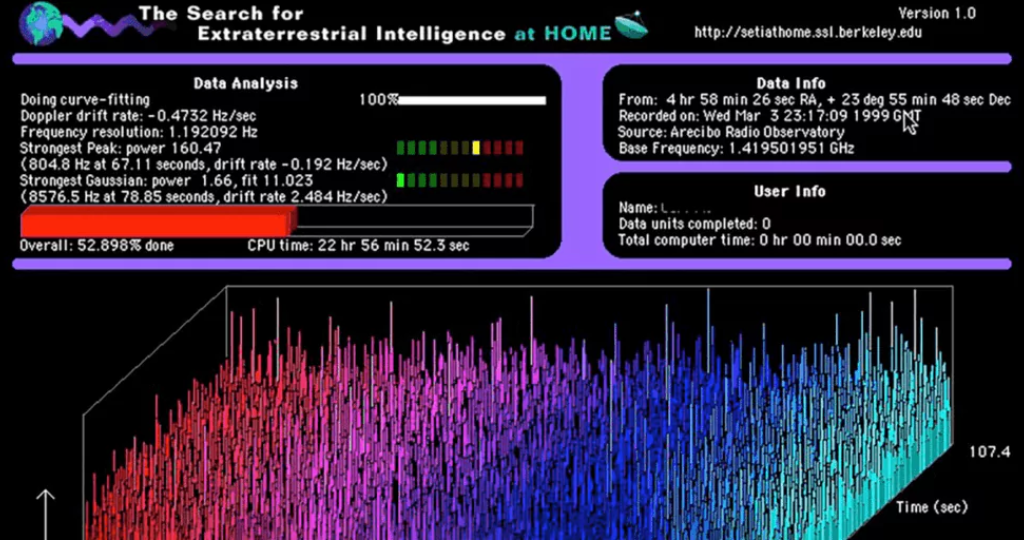

Every year, private industry overlooks hundreds of potentially groundbreaking scientific computing projects, either due to the upfront cost or uncertain prospects for profitability. One of the most affected areas is what’s known as “fundamental research”—exactly the kind of rigorous theory testing required to better understand natural phenomena, but which usually doesn’t translate to short-term returns. That means the burden of advancing human progress often falls on the taxpayers through federal research grants. Government science already runs on citizen dollars. If we ran it on citizen machines on a distributed cloud, we could conduct life-changing research and return some of the taxpayers’ hard-earned income. The Compute-Industrial Complex Whether they know it or not, taxpayers have always been instrumental to pushing the boundaries of science. Today’s most robust computer modeling is only made possible through public funds. Without these resources, scientists could never study climate change on a digital twin of the Earth, or simulate the young universe to grok the Big Bang. Humanity owes a large part of its technological legacy to the continuous give-and-take between public sector research and private sector development. Entire multinational industries derive their lifeblood from scientific discoveries promulgated by the world’s governments. And though we may benefit from the resulting products and services, everyday people like you and me are footing the bill at both ends. In government-administered computing projects, the burden on taxpayers is unnecessarily compounded by infrastructure; the bulk of public compute-assisted research funding goes to building the kinds of supercomputers necessary to actually do the work. Supercomputing clusters are complex, individualized systems where the speed of data transfer is just as vital as the total processing power. Colocating and networking processing hardware together with high-throughput interconnects helps maintain the rate of data flow, but this introduces additional, often expensive considerations like cooling and redundant energy supplies. Traditional data centers also need to contend with the hardware upgrade lifecycle; without regular upgrades, many supercomputers become obsolete within five years. Only a handful of companies (such as HPE or IBM) have access to both the expertise and the relationships with hardware suppliers required to design and build massive supercomputing infrastructure. Other renowned private enterprises may specialize in building these types of systems under contract, but neither their services nor the underlying hardware come cheap. Citizen Science Isn’t New Given the exponential pace of computer development, it makes sense that the majority of today’s fundamental research should incorporate sophisticated technology. But did you know that distributed networks of heterogeneous consumer hardware can act as cloud layers that are equally performant and more cost-effective than traditional data centers? Consumer-supported computing allows individual users to connect their PCs to a distributed network in order to process discrete parts of a larger workload. With a well-architected system in place, anyone with a reasonably powerful computer1 and access to the Internet could support cutting-edge research like protein synthesis or climate modeling right from home. There are also good reasons to think that private citizens would be more than willing to do so. Do you recall seeing monitors in your school computer lab displaying colorful charts like these? That was none other than SETI@home, a crowdsourced research application created by the Search for Extraterrestrial Intelligence (SETI) in hopes of identifying anomalous events that may originate from our intergalactic neighbors. With thousands of connected individuals lending processing power to SETI’s workloads, researchers were able to analyze a massive collection of radio wave emissions originally detected by the Arecibo and Green Bank radio telescopes. SETI@home established one of the first successful implementations of consumer-supported distributed computing at scale. In March 2020, SETI shut down the project after 21 years of operation because it was too successful; network participants had processed such an overwhelming volume of data that astronomers would need years to cross-reference the results for signs of extraterrestrial life. Following their example, researchers at Berkeley developed the Berkeley Open Infrastructure for Network Computing (BOINC) platform. BOINC permits academic researchers to upload programs and data as workloads for distributed processing on a consumer-supported network, where participants—private individuals running the BOINC desktop client on their personal computers—can voluntarily share resources with research that interests them.2 Since its founding, the BOINC platform has gone on to support SETI@home and a multitude of other fundamental research projects in various scientific fields. These projects have proven that consumer-supported, distributed networks can be leveraged for scientific applications. With the right incentives to participate, I believe we can reduce the typical fundamental research budget to a trivial expense. A Problem of Incentive The world’s latent processing reserves are perfect for the types of government-administered, scientific research projects that usually require expensive supercomputers.3 Since the hardware has been purchased by private consumers, there are fewer concerns about budgeting for expensive upgrades simply to reach a current hardware generation or speed up your 3D-accelerated processing. Distributed networks like BOINC and SETI@home demonstrated just how readily millions might choose to aid a scientific effort out of enthusiasm alone (an exciting data point for those managing research grants at the NSF, to say the least). All that’s left is networking those devices in a way that allows dynamic allocation of their shared resources—but accomplishing that requires solving a problem endemic to contemporary academic science. How do you motivate enough participants to conduct the research? Of hundreds of vital research projects listed on the BOINC platform at any given time, only a thankful few garner sufficient interest or processing supply to achieve their desired outcomes, while sexier projects—like curing COVID-19, or dialing up the nearest spacefaring civilization—attract supporters in droves. Far too often, high-profile workloads overshadow equally important research in niche fields that doesn’t make for a good headline. When forced to compete for mindshare, scientists must effectively moonlight as marketers simply to conduct their research. But what if you could engage a whole nation of users with a modest incentive? At Salad Technologies, we’ve built a distributed cloud network based on a mutual reward model we call computesharing. The Computesharing Model Most fundamental research projects require public funds and private computing infrastructure, but the actual computations are done on processors identical to those

Perfect Device Fingerprinting at Scale

The Idea The Web Scraping Clubs’ recent series on device fingerprinting has been making the rounds here at Salad, and this got the team and I very excited! Pierluigi Vinciguerra, co-founder of databoutique.com, outlined how device fingerprinting is used for cookie-free device tracking and bot detection, both being key inputs to block web scraping operations at scale. To effectively and consistently collect a large volume of data, you need to spoof device fingerprints to bypass these systems…that is, unless you’re collecting this data from real-world consumer devices! At Salad, we’ve already built a global network of tens of thousands of residential PCs to lower the cost of cloud computing. Could our infrastructure help solve the problem of device fingerprinting for web scraping companies, simply by making spoofing unnecessary? After all, PCs on the Salad network already have the exact hardware that web scraping developers are going to great lengths to emulate. Better yet, our compute costs are a fraction of major cloud providers and each node comes with high-quality residential IPs inbuilt! When we decided to put it to the test, our hunch proved correct: 100% of the 1000+ unique Salad nodes we tested passed both the fingerprinting test and ‘Harrods’ test. Read on to learn how we did it, and how to use our network to power your own web scraping at scale. Skipping the Spoof Instead of spoofing device hardware, IP address, and other features that are used to detect a bot, we decided to use what we already have: our compute network. The Salad Network consists of tens of thousands of unique consumer PCs (or “nodes”), concurrently connected to our centralized servers and receiving containerized workloads to run based on characteristics of the nodes themselves. We compensate the owners of these nodes for sharing their compute resources. Because these nodes typically have powerful GPUs attached, most of the workloads currently running on the network are for generative AI companies. However, we suspected these nodes would work well for the web scraping use case because they typically have: We already know what hardware is on each machine, what their average internet speeds are, and their IP quality. With that in mind, we set up and conducted two tests to verify point #3 – that the hardware on these nodes would obviate the need for device hardware spoofing. Testing Step 1. Target selection We had two objectives for these tests, to better understand how our network would perform for a web scraping use case: For the first test, we decided to use GoLogin’s browser fingerprinting test. GoLogin provides a product for customizing the hardware and software fingerprint that you want to send to a target, but they also offer a free online fingerprinting test that looks at a wide range of browser- and device- level characteristics across the following categories: For the real-world test, we crawled the Italian homepage of Harrod’s ecommerce site and the first 8 pages of results in the ‘Womens’ section. Step 2. Create Playwright script Here, we’re using firefox in headless mode, targeting the first destination (gologin) and screenshotting the result. The script is saved to tests_pl_firefox.py, and we extended our script to upload the images to a central location so they could be reviewed. Here’s the script we used for the gologin test: Step 3. Create Dockerfile We used the official Playwright docker container hosted on the azure container directory at http://mcr.microsoft.com/playwright:v1.34.3. Step 4. Deploy We created an organization in the Salad Container Engine and a container group which pointed at the source image detailed in Step 3. Then we configured the container group to be deployed to 1000 nodes, each with 1 vCPU and 1GB RAM. The total cost of the test was a few cents. Results The most time-consuming part of the test, by far, was reviewing all of the screenshots that we collected! If we ran this test again, we’d just update the script to extract certain page components and return a score – that’s probably what a real web scraping expert would do. Despite all the extra work we had to do, the results were encouraging: 100% of nodes were detected as ‘clean’ by gologin across all measured device features, and 100% of nodes returned complete results pages from the live test of harrods.com. Web scraping results from GoLogin using Salad This means that we achieved a 100% scraping success rate without any attempt to spoof device fingerprint. Granted, this was only conducted against two sites, and only at a single point in time – but it bodes well for future investigation by ourselves and our web scraping partners. While encouraging, these results were unsurprising – it just makes sense that using consumer PCs with a residential internet connection for scraping would avoid the need for spoofing device hardware and IP, because these are exactly the devices that web scraping developers attempt to emulate. If you’d like to learn more about Salad’s global network of computing nodes, want to replicate our tests, experiment with your own scraping workload, or just chat webscraping, check us out here! We’d love to hear from you.